Welcome OpenAI’s GPT-OSS to ShareAI

ShareAI is committed to bringing you the latest and most powerful AI models—and we’re doing it again today.

Hours ago, OpenAI released its groundbreaking GPT-OSS models, and you can already start using them within the ShareAI network!

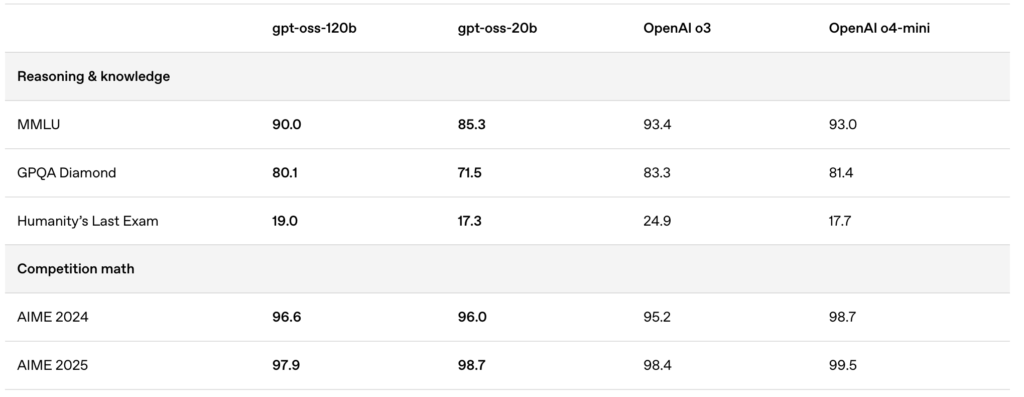

The newly released models—gpt-oss:20b and gpt-oss:120b—deliver exceptional local chat experiences, powerful reasoning capabilities, and enhanced support for advanced developer scenarios.

🚀 Get Started with GPT-OSS on ShareAI

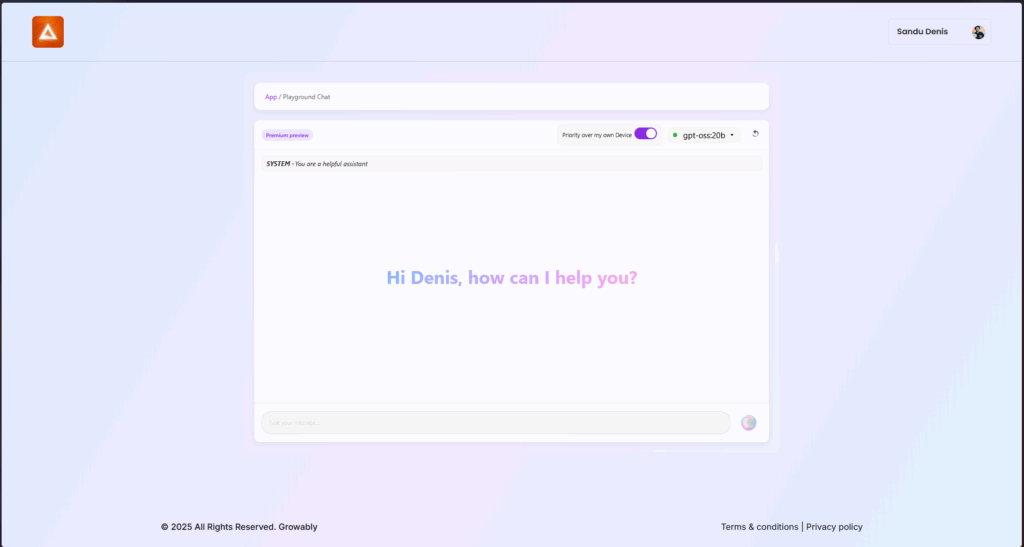

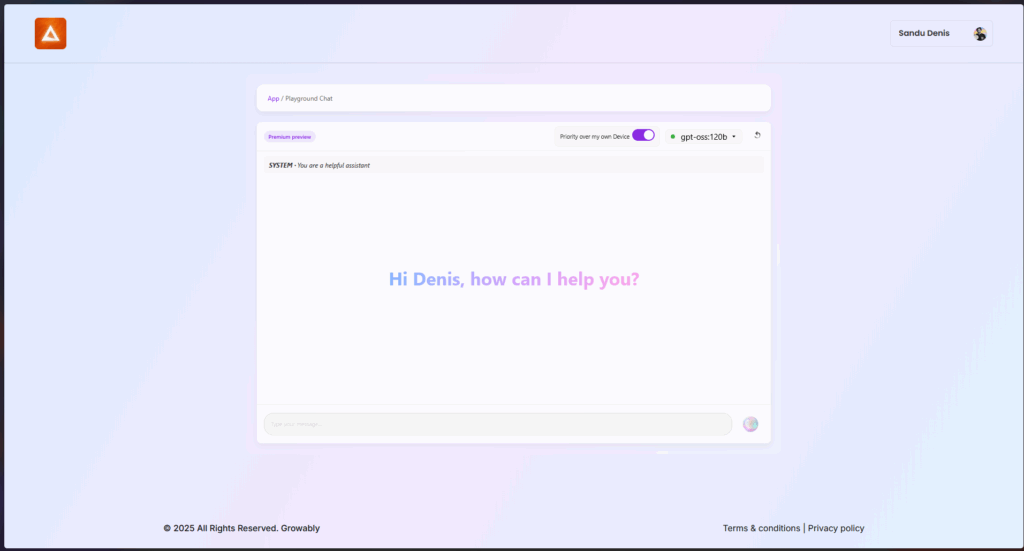

You can try these models right now using the latest version of ShareAI’s Windows client.

- Download ShareAI for Windows (link to the download page)

After installation, you can easily download and run the GPT-OSS models directly from the ShareAI app—no complicated setups or configurations required.

✨ GPT-OSS Feature Highlights

Here’s why GPT-OSS is a game-changer for your AI-driven workflows:

- Agentic capabilities: Use built-in function calling, web browsing, Python tool calls, and structured data outputs.

- Full chain-of-thought transparency: See the model’s reasoning process, which makes debugging easier and generated results easier to trust.

- Configurable reasoning levels: Adjust reasoning effort (low, medium, high) to match your latency and complexity needs.

- Fine-tunable architecture: Customize the models with parameter fine-tuning for specific use cases.

- Permissive Apache 2.0 License: Build and ship commercially without copyleft restrictions, and with the explicit patent grant the license provides. A good fit for commercial deployment and rapid experimentation.
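To make the configurable reasoning levels concrete, here is a minimal sketch of how a request might select one. It assumes a generic chat-style payload and the convention from OpenAI's published gpt-oss guidance of setting the level with a `Reasoning: <level>` line in the system prompt. The helper name `build_chat_request` and the payload shape are hypothetical; this post does not describe ShareAI's own interface, so treat this as an illustration rather than the ShareAI API.

```python
# Hypothetical helper: builds a generic chat-style payload.
# The payload shape is an assumption, not a documented ShareAI API.

VALID_EFFORTS = ("low", "medium", "high")


def build_chat_request(prompt, effort="medium", model="gpt-oss:20b"):
    """Return a chat payload that requests a given reasoning effort.

    Assumes the reasoning level is selected through a system prompt
    line of the form "Reasoning: <level>".
    """
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": f"Reasoning: {effort}"},
            {"role": "user", "content": prompt},
        ],
    }


if __name__ == "__main__":
    # Low effort for a quick lookup, high effort for a harder problem.
    quick = build_chat_request("What is the capital of France?", effort="low")
    deep = build_chat_request("Plan a database schema migration.", effort="high")
    print(quick["messages"][0]["content"])
    print(deep["messages"][0]["content"])
```

Lower effort generally trades reasoning depth for latency, so it suits short lookups, while higher effort suits multi-step problems.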

🔥 Optimized with MXFP4 Quantization

OpenAI ships the GPT-OSS models with weights quantized in the MXFP4 format, which significantly reduces their memory footprint:

- MXFP4 Format Explained: The Mixture-of-Experts (MoE) weights are quantized down to just 4.25 bits per parameter. Because these MoE weights make up over 90% of the total model parameters, quantizing them accounts for most of the memory savings.

- Enhanced Compatibility: This quantization allows:

  - The gpt-oss:20b model to run smoothly on machines with as little as 16 GB of memory.

  - The gpt-oss:120b model to fit on a single 80 GB GPU.
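Where does the 4.25 figure come from? The sketch below works through the arithmetic. It assumes the block layout from the OCP Microscaling (MX) specification, which this post does not spell out: 32 four-bit elements share one 8-bit scale. The weight-size estimate is rougher still: it uses the nominal 20 billion parameter count from the model name, takes the "over 90%" MoE share at exactly 90%, and assumes the remaining weights are stored in 16 bits. It covers weights only, not activations or the KV cache.

```python
# Assumed MXFP4 block layout (from the OCP Microscaling spec, not this post):
# 32 four-bit elements plus one shared 8-bit scale per block.
ELEMENT_BITS = 4
SCALE_BITS = 8
BLOCK_SIZE = 32


def bits_per_parameter(element_bits=ELEMENT_BITS,
                       scale_bits=SCALE_BITS,
                       block_size=BLOCK_SIZE):
    """Average bits stored per weight, amortizing the shared scale."""
    return element_bits + scale_bits / block_size


def weight_gb(total_params, quantized_fraction, other_bits=16):
    """Rough weight size in GB (10^9 bytes).

    quantized_fraction of the parameters use the block-scaled 4-bit
    format; the rest are assumed to be stored in `other_bits` bits.
    """
    quantized_bits = total_params * quantized_fraction * bits_per_parameter()
    other = total_params * (1 - quantized_fraction) * other_bits
    return (quantized_bits + other) / 8 / 1e9


if __name__ == "__main__":
    print(bits_per_parameter())  # 4.25
    # Nominal 20B parameters, 90% quantized: roughly 13.6 GB of weights.
    print(f"{weight_gb(20e9, 0.9):.1f} GB")
```

Since the MoE share is stated as over 90%, the real weight footprint should come in below this estimate, which is consistent with the 16 GB figure above.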

ShareAI supports this MXFP4 format natively—no extra steps, conversions, or hassles required. Our latest engine update comes with newly developed kernels specifically designed for the MXFP4 format, ensuring peak performance.

📌 GPT-OSS Models Available Now:

GPT-OSS:20B

- Ideal for low-latency, specialized tasks, or local deployments.

- Offers robust performance even on modest hardware setups.

GPT-OSS:120B

- Delivers powerful reasoning, advanced agentic capabilities, and versatility suited for demanding tasks at scale.

- Perfect for developers and enterprises requiring deeper reasoning, higher accuracy, and broader AI applications.

🛠️ Try GPT-OSS today

Ready to experience next-gen AI capabilities?

- Download ShareAI Windows Client (link to download page)

- View Documentation (link to user docs or tutorials)

Stay tuned—ShareAI continues to expand our model library, empowering your AI projects every step of the way.

Happy prompting! 🚀