Arch Gateway Alternatives 2026: Top 10

Updated April 2026

If you’re evaluating Arch Gateway alternatives, this guide maps the landscape like a builder would. First, we clarify what Arch Gateway is (a prompt-aware gateway for LLM traffic and agentic apps), then compare the 10 best alternatives. We place ShareAI first for teams that want one API across many providers; visibility into price, latency, uptime, and availability before routing; instant failover; and people-powered economics (70% of spend goes to providers).

Quick links — Browse Models · Open Playground · Create API Key · API Reference · User Guide · Releases

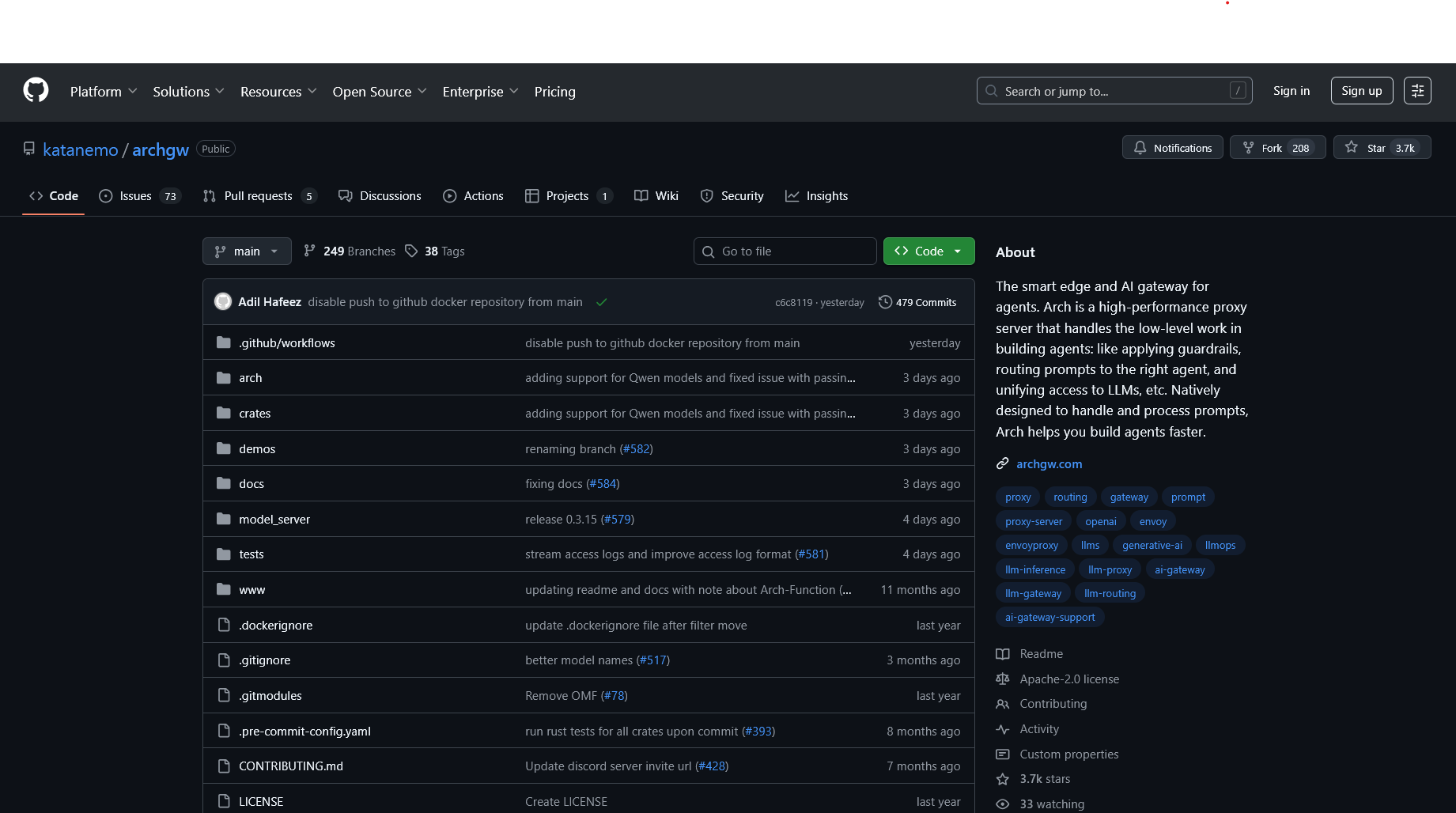

What Arch Gateway is (and isn’t)

What it is. Arch Gateway (often shortened to “Arch” / archgw) is an AI-aware gateway for agentic apps. It sits at the edge of your stack to apply guardrails, normalize/clarify inputs, route prompts to the right tool or model, and unify access to LLMs—so your app can focus on business logic instead of infrastructure plumbing.

What it isn’t. Arch is a governance-and-routing layer for prompts and agents; it’s not a transparent model marketplace that shows provider price, latency, uptime, and availability before you route. That’s where ShareAI shines.

Aggregators vs Gateways vs Agent platforms

- LLM aggregators — One API across many models/providers with pre-route transparency (price, latency, uptime, availability, provider type) and smart routing/failover. Example: ShareAI.

- AI gateways — Edge governance for LLM traffic (keys, policies, rate limits, guardrails) plus observability. You bring your providers. Examples: Arch Gateway, Kong AI Gateway, Portkey.

- Agent/chatbot platforms — Packaged UX (memory/tools/channels) for assistants; closer to product than infra. Example: Orq.

How we evaluated the best Arch Gateway alternatives

- Model breadth & neutrality — proprietary + open; easy switching; no rewrites.

- Latency & resilience — routing policies, timeouts/retries, instant failover.

- Governance & security — key handling, scopes, regional routing, guardrails.

- Observability — logs/traces and cost/latency dashboards.

- Pricing transparency & TCO — compare real costs before you route.

- Developer experience — docs, SDKs, quickstarts; time-to-first-token.

- Community & economics — whether your spend grows supply (incentives for GPU owners).
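The latency and resilience criterion (timeouts, retries, failover) is easier to judge when you can see what the logic involves. Below is a minimal client-side sketch, assuming an ordered list of OpenAI-compatible endpoints; the route objects, their names and URLs, and the 10-second default timeout are hypothetical. It tries each route with a per-attempt timeout and moves to the next one on a timeout, network error, 429, or 5xx. An aggregator or gateway with built-in failover does this server-side; the sketch only illustrates the shape of the behavior.

```javascript
// Client-side failover sketch. Route names, URLs, and the timeout are hypothetical;
// each route is assumed to accept an OpenAI-style chat payload.

// 429 (rate limited) and 5xx are worth retrying elsewhere; other 4xx are caller errors.
function isRetryableStatus(status) {
  return status === 429 || status >= 500;
}

async function callWithFailover(routes, payload, { timeoutMs = 10000, fetchImpl = fetch } = {}) {
  const errors = [];
  for (const route of routes) {
    // Per-attempt timeout: abort if response headers don't arrive within timeoutMs.
    const controller = new AbortController();
    const timer = setTimeout(() => controller.abort(), timeoutMs);
    let res;
    try {
      res = await fetchImpl(route.url, {
        method: "POST",
        headers: {
          "Authorization": `Bearer ${route.apiKey}`,
          "Content-Type": "application/json"
        },
        body: JSON.stringify(payload),
        signal: controller.signal
      });
    } catch (err) {
      // Timeout (AbortError) or network failure: record it and try the next route.
      errors.push(`${route.name}: ${err.name === "AbortError" ? "timeout" : err.message}`);
      continue;
    } finally {
      clearTimeout(timer);
    }
    if (res.ok) {
      return { route: route.name, data: await res.json() };
    }
    if (!isRetryableStatus(res.status)) {
      // Failing over will not fix a malformed request, so surface it immediately.
      throw new Error(`${route.name}: non-retryable status ${res.status}`);
    }
    errors.push(`${route.name}: status ${res.status}`);
  }
  throw new Error(`All routes failed: ${errors.join("; ")}`);
}
```

Order the routes by preference (for example, cheapest first). A 4xx other than 429 stops immediately, because resending a malformed request to another provider only adds cost.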

Top 10 Arch Gateway alternatives

#1 — ShareAI (People-Powered AI API)

What it is. A multi-provider API with a transparent marketplace and smart routing. With one integration, you can browse a large catalog of models and providers, compare price, latency, uptime, availability, and provider type, then route with instant failover.

Why it’s #1 here. If you want provider-agnostic aggregation with pre-route transparency and resilience, ShareAI is the most direct fit. Keep a gateway if you need org-wide policies; add ShareAI for marketplace-guided routing.

- One API → 150+ models across many providers; no rewrites, no lock-in.

- Transparent marketplace: choose by price, latency, uptime, availability, provider type.

- Resilience by default: routing policies + instant failover.

- Fair economics: 70% of spend goes to providers (community or company).
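The marketplace bullets above amount to a ranking problem: filter providers on availability and reliability, then order them by price and latency. A minimal sketch, assuming a snapshot of offers with hypothetical fields (provider, pricePer1kTokens, p50LatencyMs, uptime, available); ShareAI's real marketplace data may be shaped differently. The output is an ordered list that could feed a failover loop: the first entry is the primary route and the rest are fallbacks.

```javascript
// Marketplace-guided route selection sketch. The offer fields below are hypothetical,
// not ShareAI's actual response schema.
function rankProviders(offers, { minUptime = 0.99, maxLatencyMs = Infinity } = {}) {
  return offers
    // Drop offers that are offline, below the uptime floor, or too slow.
    .filter((o) => o.available && o.uptime >= minUptime && o.p50LatencyMs <= maxLatencyMs)
    // Cheapest first; break price ties with lower latency.
    .sort((a, b) => a.pricePer1kTokens - b.pricePer1kTokens || a.p50LatencyMs - b.p50LatencyMs)
    // Ordered list: first entry is the primary route, the rest are fallbacks.
    .map((o) => o.provider);
}

// Example with made-up numbers.
const sampleOffers = [
  { provider: "p1", pricePer1kTokens: 0.0006, p50LatencyMs: 900, uptime: 0.999, available: true },
  { provider: "p2", pricePer1kTokens: 0.0004, p50LatencyMs: 1200, uptime: 0.995, available: true },
  { provider: "p3", pricePer1kTokens: 0.0003, p50LatencyMs: 700, uptime: 0.97, available: true },
  { provider: "p4", pricePer1kTokens: 0.0004, p50LatencyMs: 800, uptime: 0.999, available: false }
];
console.log(rankProviders(sampleOffers)); // [ 'p2', 'p1' ]
```

Here p3 is cheapest but misses the uptime floor and p4 is offline, so p2 becomes primary with p1 as fallback. Tightening maxLatencyMs to 1000 would leave only p1.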

Quick links — Browse Models · Open Playground · Create API Key · API Reference · User Guide

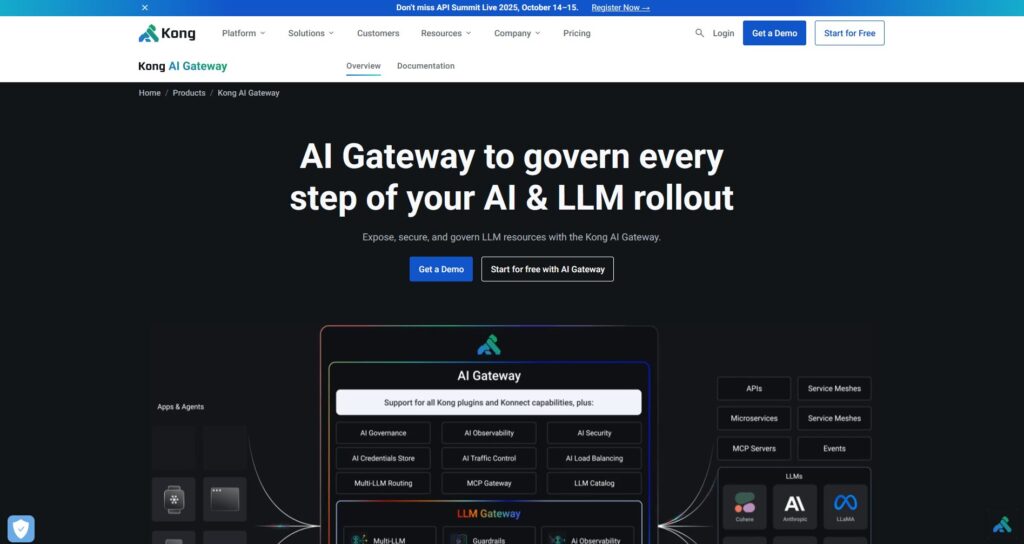

#2 — Kong AI Gateway

What it is. Enterprise AI/LLM gateway—governance, policies/plugins, analytics, and observability for AI traffic at the edge. It’s a control plane rather than a marketplace.

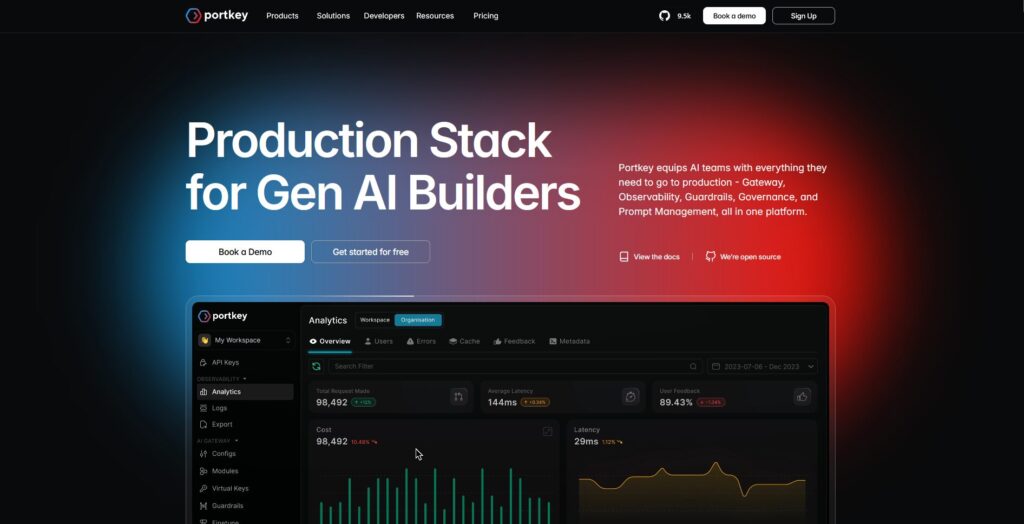

#3 — Portkey

What it is. AI gateway emphasizing guardrails and observability—popular in regulated environments.

#4 — OpenRouter

What it is. Unified API over many models; great for fast experimentation across a wide catalog.

#5 — Eden AI

What it is. Aggregates LLMs plus broader AI capabilities (vision, translation, TTS), with fallbacks/caching and batching.

#6 — LiteLLM

What it is. A lightweight Python SDK + self-hostable proxy that speaks an OpenAI-compatible interface to many providers.

#7 — Unify

What it is. Quality-oriented routing and evaluation to pick better models per prompt.

#8 — Orq AI

What it is. Orchestration/collaboration platform that helps teams move from experiments to production with low-code flows.

#9 — Apigee (with LLMs behind it)

What it is. A mature API management/gateway you can place in front of LLM providers to apply policies, keys, and quotas.

#10 — NGINX

What it is. Use NGINX to build custom routing, token enforcement, and caching for LLM backends if you prefer DIY control.

Arch Gateway vs ShareAI

If you need one API over many providers with transparent pricing/latency/uptime/availability and instant failover, choose ShareAI. If your top requirement is egress governance—centralized credentials, policy enforcement, and prompt-aware routing—Arch Gateway fits that lane. Many teams pair them: gateway for org policy + ShareAI for marketplace routing.

Quick comparison

| Platform | Who it serves | Model breadth | Governance & security | Observability | Routing / failover | Marketplace transparency | Provider program |
|---|---|---|---|---|---|---|---|
| ShareAI | Product/platform teams needing one API + fair economics | 150+ models, many providers | API keys & per-route controls | Console usage + marketplace stats | Smart routing + instant failover | Yes (price, latency, uptime, availability, provider type) | Yes: open supply, 70% to providers |
| Arch Gateway | Teams building agentic apps needing prompt-aware edge | BYO providers | Guardrails, keys, policies | Tracing/observability for prompts | Conditional routing to agents/tools | No (infra tool, not a marketplace) | n/a |
| Kong AI Gateway | Enterprises needing gateway-level policy | BYO | Strong edge policies/plugins | Analytics | Retries via plugins | No | n/a |
| Portkey | Regulated/enterprise teams | Broad | Guardrails & governance | Deep traces | Conditional routing | Partial | n/a |
| OpenRouter | Devs wanting one key | Wide catalog | Basic API controls | App-side | Fallbacks | Partial | n/a |
| Eden AI | Teams needing LLM + other AI services | Broad | Standard controls | Varies | Fallbacks/caching | Partial | n/a |
| LiteLLM | DIY/self-host proxy | Many providers | Config/key limits | Your infra | Retries/fallback | n/a | n/a |
| Unify | Quality-driven teams | Multi-model | Standard API security | Platform analytics | Best-model selection | n/a | n/a |
| Orq | Orchestration-first teams | Wide support | Platform controls | Platform analytics | Orchestration flows | n/a | n/a |
| Apigee / NGINX | Enterprises / DIY | BYO | Policies | Add-ons / custom | Custom | n/a | n/a |

Pricing & TCO: compare real costs (not just unit prices)

A raw $/1K-token price hides the true picture. TCO shifts with retries/fallbacks, latency (which affects usage), provider variance, observability storage, and evaluation runs. A transparent marketplace helps you choose routes that balance cost and UX.

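$$
\mathrm{TCO} \approx \sum \bigl(\mathrm{Base\_tokens} \times \mathrm{Unit\_price} \times (1 + \mathrm{Retry\_rate})\bigr) + \mathrm{Observability\_storage} + \mathrm{Evaluation\_tokens} + \mathrm{Egress}
$$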
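The formula can be turned into a small calculator. This sketch assumes unit prices are quoted per 1K tokens, that the sum runs over routes (model/provider pairs), and that Evaluation_tokens is entered as the dollar cost of evaluation runs; the route names and every number in the example are made up for illustration.

```javascript
// Sketch of the TCO formula. Prices are per 1K tokens; all other terms are in dollars.
// Route names and every number in the example are hypothetical.
function estimateTco(routes, { observabilityStorage = 0, evaluationCost = 0, egress = 0 } = {}) {
  // Sum over routes: Base_tokens x Unit_price x (1 + Retry_rate)
  const tokenCost = routes.reduce(
    (sum, r) => sum + (r.baseTokens / 1000) * r.pricePer1kTokens * (1 + r.retryRate),
    0
  );
  // Costs that unit prices never show.
  return tokenCost + observabilityStorage + evaluationCost + egress;
}

// Example: ~2M tokens/day split across two routes.
const dailyTco = estimateTco(
  [
    { name: "route-a", baseTokens: 1_500_000, pricePer1kTokens: 0.0008, retryRate: 0.04 },
    { name: "route-b", baseTokens: 500_000, pricePer1kTokens: 0.0012, retryRate: 0.1 }
  ],
  { observabilityStorage: 0.25, evaluationCost: 0.15, egress: 0.05 }
);
console.log(dailyTco.toFixed(3)); // 2.358
```

With these inputs, raw token spend is 1.80, retries lift it to 1.908 (about 6% more), and the non-token terms add another 0.45, which is the kind of gap a unit-price comparison alone would miss.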

- Prototype (~10k tokens/day): Optimize for time-to-first-token (Playground, quickstarts).

- Mid-scale (~2M tokens/day): Marketplace-guided routing/failover can trim 10–20% while improving UX.

- Spiky workloads: Expect higher effective token costs from retries during failover; budget for it.

Migration guide: moving to ShareAI

From Arch Gateway

Keep gateway-level policies where they shine and add ShareAI for marketplace routing and instant failover. Pattern: gateway auth/policy → ShareAI route per model → measure marketplace stats → tighten policies.

From OpenRouter

Map model names, verify prompt parity, then shadow 10% of traffic and ramp 25% → 50% → 100% as latency/error budgets hold. Marketplace data makes provider swaps straightforward.
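The shadow-then-ramp steps (10% shadow, then 25%, 50%, and 100% while latency and error budgets hold) can be sketched as a deterministic traffic split plus a gate that advances the ramp. Assumptions, all hypothetical: a 32-bit string hash for bucketing, the route labels legacy/shadow/shareai, a 1% error-rate budget, and a 2-second p95 latency budget.

```javascript
// Staged-migration sketch. The step list mirrors the text (shadow 10%, then 25/50/100%);
// the budgets, hash, and route labels are hypothetical.
const RAMP_STEPS = [10, 25, 50, 100];

// Deterministic bucketing (0-99): the same request or user id always gets the same bucket,
// so a given caller does not flip between old and new routes mid-session.
function bucketOf(id) {
  let h = 0;
  for (const ch of String(id)) {
    h = (h * 31 + ch.codePointAt(0)) >>> 0; // 32-bit rolling hash
  }
  return h % 100;
}

// "shadow": send a mirrored request to the new route but serve the legacy response.
function routeFor(id, percent) {
  if (bucketOf(id) >= percent) return "legacy";
  return percent === RAMP_STEPS[0] ? "shadow" : "shareai";
}

// Move to the next step only while the new route stays within its budgets;
// on a breach, drop back to shadow mode.
function nextRampPercent(current, stats, { maxErrorRate = 0.01, maxP95Ms = 2000 } = {}) {
  const withinBudget = stats.errorRate <= maxErrorRate && stats.p95LatencyMs <= maxP95Ms;
  if (!withinBudget) return RAMP_STEPS[0];
  const idx = RAMP_STEPS.indexOf(current);
  return RAMP_STEPS[Math.min(idx + 1, RAMP_STEPS.length - 1)];
}
```

Bucketing on a stable id rather than Math.random() keeps each caller on one route, which makes before/after latency and error comparisons cleaner.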

From LiteLLM

Replace the self-hosted proxy on production routes you don’t want to operate; keep LiteLLM for dev if desired. Compare ops overhead vs. managed routing benefits.
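Because both LiteLLM's proxy and ShareAI speak an OpenAI-compatible interface, keeping LiteLLM for dev while production goes through ShareAI can come down to picking a base URL and key per environment. A minimal sketch, assuming the environment variable names LITELLM_BASE_URL and LITELLM_API_KEY (hypothetical) and a local proxy at http://localhost:4000 (an assumed default; check your own proxy configuration). The ShareAI URL is the one used in the quickstart below.

```javascript
// Environment-based endpoint selection. LITELLM_BASE_URL, LITELLM_API_KEY, and the
// localhost fallback are hypothetical; the ShareAI URL matches the quickstart.
function chatEndpointConfig(env = process.env) {
  const isProd = env.NODE_ENV === "production";
  const baseUrl = isProd
    ? "https://api.shareai.now/v1"
    : env.LITELLM_BASE_URL || "http://localhost:4000";
  return {
    // Strip trailing slashes so "/v1/" and "/v1" both yield a clean path.
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    apiKey: isProd ? env.SHAREAI_API_KEY : env.LITELLM_API_KEY
  };
}
```

The request body and headers stay identical across environments; only the url and apiKey returned here change.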

From Unify / Portkey / Orq / Kong

Define feature-parity expectations (analytics, guardrails, orchestration, plugins). Many teams run hybrid: keep specialized features where they’re strongest; use ShareAI for transparent provider choice and failover.

Developer quickstart (copy-paste)

The following examples use an OpenAI-compatible surface. Replace YOUR_KEY with your ShareAI key (get one at Create API Key), and see the API Reference for details.

```bash
#!/usr/bin/env bash
# cURL (bash): Chat Completions
# Prereqs:
#   export SHAREAI_API_KEY="YOUR_KEY"

curl -X POST "https://api.shareai.now/v1/chat/completions" \
  -H "Authorization: Bearer $SHAREAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "llama-3.1-70b",
    "messages": [
      { "role": "user", "content": "Give me a short haiku about reliable routing." }
    ],
    "temperature": 0.4,
    "max_tokens": 128
  }'
```

```javascript
// JavaScript (fetch): Node 18+ / Edge runtimes
// Prereqs: set the key in your environment before running, e.g.
//   export SHAREAI_API_KEY="YOUR_KEY"

async function main() {
  const res = await fetch("https://api.shareai.now/v1/chat/completions", {
    method: "POST",
    headers: {
      "Authorization": `Bearer ${process.env.SHAREAI_API_KEY}`,
      "Content-Type": "application/json"
    },
    body: JSON.stringify({
      model: "llama-3.1-70b",
      messages: [
        { role: "user", content: "Give me a short haiku about reliable routing." }
      ],
      temperature: 0.4,
      max_tokens: 128
    })
  });

  if (!res.ok) {
    console.error("Request failed:", res.status, await res.text());
    return;
  }

  const data = await res.json();
  console.log(JSON.stringify(data, null, 2));
}

main().catch(console.error);
```

Security, privacy & compliance checklist (vendor-agnostic)

- Key handling: rotation cadence; minimal scopes; environment separation.

- Data retention: where prompts/responses are stored, for how long; redaction defaults.

- PII & sensitive content: masking; access controls; regional routing for data locality.

- Observability: prompt/response logging; ability to filter or pseudonymize; propagate trace IDs consistently.

- Incident response: escalation paths and provider SLAs.
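The PII and observability items above can be partly enforced at the logging boundary. This sketch redacts email addresses and phone-number-like strings before a prompt/response pair is logged and keeps the caller's trace ID on the record. The two regular expressions are illustrative assumptions and nowhere near complete PII detection; treat them as a placeholder for a dedicated redaction service or library.

```javascript
// Illustrative patterns only; real PII detection needs far more than two regexes.
const REDACTIONS = [
  { label: "EMAIL", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  // Phone-like runs: optional "+", then at least 9 digit/separator characters,
  // starting and ending with a digit.
  { label: "PHONE", pattern: /\+?\d[\d\s().-]{7,}\d/g }
];

// Replace each match with a placeholder such as "[EMAIL]" before anything is persisted.
function redact(text) {
  return REDACTIONS.reduce((out, { label, pattern }) => out.replace(pattern, `[${label}]`), text);
}

// Build a log record that keeps the caller's trace ID so prompt logs can be joined
// with gateway/provider traces, while storing only redacted text.
function toLogRecord(traceId, prompt, response) {
  return {
    traceId,
    prompt: redact(prompt),
    response: redact(response),
    loggedAt: new Date().toISOString()
  };
}
```

For example, redact("Contact jane.doe@example.com or +1 (555) 123-4567.") yields "Contact [EMAIL] or [PHONE]."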

FAQ — Arch Gateway vs other competitors

Arch Gateway vs ShareAI — which for multi-provider routing?

ShareAI. It’s built for marketplace transparency (price, latency, uptime, availability, provider type) and smart routing/failover across many providers. Arch Gateway is a prompt-aware governance/routing layer (guardrails, agent routing, unified LLM access). Many teams use both.

Arch Gateway vs OpenRouter — quick multi-model access or gateway controls?

OpenRouter gives quick multi-model access; Arch centralizes policy/guardrails and agent routing. If you also want pre-route transparency and instant failover, ShareAI combines multi-provider access with a marketplace view and resilient routing.

Arch Gateway vs Traefik AI Gateway — thin AI layer or marketplace routing?

Both are gateways (credentials/policies; observability). If the goal is provider-agnostic access with transparency and failover, add ShareAI.

Arch Gateway vs Kong AI Gateway — two gateways

Both are gateways (policies/plugins/analytics), not marketplaces. Many teams pair a gateway with ShareAI for transparent multi-provider routing and failover.

Arch Gateway vs Portkey — who’s stronger on guardrails?

Both emphasize governance and observability; depth and ergonomics differ. If your main need is transparent provider choice and failover, add ShareAI.

Arch Gateway vs Unify — best-model selection vs policy enforcement?

Unify focuses on evaluation-driven model selection; Arch on guardrails + agent routing. For one API over many providers with live marketplace stats, use ShareAI.

Arch Gateway vs Eden AI — many AI services or egress control?

Eden AI aggregates several AI services (LLM, image, TTS). Arch centralizes policy/credentials and agent routing. For transparent pricing/latency across many providers and instant failover, choose ShareAI.

Arch Gateway vs LiteLLM — self-host proxy or managed gateway?

LiteLLM is a DIY proxy you operate; Arch is a managed, prompt-aware gateway. If you’d rather not run a proxy and want marketplace-driven routing, choose ShareAI.

Arch Gateway vs Orq — orchestration vs egress?

Orq orchestrates workflows; Arch governs prompt traffic and agent routing. ShareAI complements either with transparent provider selection.

Arch Gateway vs Apigee — API management vs AI-specific egress

Apigee is broad API management; Arch is LLM/agent-focused egress governance. Need provider-agnostic access with marketplace transparency? Use ShareAI.

Arch Gateway vs NGINX — DIY vs turnkey

NGINX offers DIY filters/policies; Arch offers packaged, prompt-aware gateway features. To avoid custom scripting and still get transparent provider selection, layer in ShareAI.

For providers: earn by keeping models online

Anyone can become a ShareAI provider—Community or Company. Onboard via Windows, Ubuntu, macOS, or Docker. Contribute idle-time bursts or run always-on. Choose your incentive: Rewards (money), Exchange (tokens/AI Prosumer), or Mission (donate a % to NGOs). As you scale, you can set your own inference prices and gain preferential exposure.

Provider links — Provider Guide · Provider Dashboard · Exchange Overview · Mission Contribution

Try ShareAI next

- Open Playground — test models live, compare latency & quality.

- Create your API key — start routing in minutes.

- Browse Models — choose by price, latency, uptime, availability, provider type.

- Read the Docs — quickstarts and references.
