WSO2 Alternatives 2026: Top 10

Updated April 2026

If you’re evaluating WSO2 alternatives, this guide maps the landscape the way a builder would. We start by clarifying where a gateway like WSO2 fits—governance at the edge, policy enforcement, and observability for AI/LLM traffic—then compare the 10 best WSO2 AI Gateway alternatives. We place ShareAI first for teams that want one API across many providers, a transparent marketplace showing price, latency, uptime, and availability before routing, instant failover, and people-powered economics (70% of spend goes to providers).

Quick links — Browse Models · Open Playground · Create API Key · API Reference · User Guide · Releases

What WSO2 AI Gateway is (and isn’t)

WSO2’s AI/Gateway approach is rooted in classic API management: centralized credentials, policy controls, and observability for traffic you send to the models you choose. That’s a governance-first control plane—you bring your providers and enforce rules at the edge—rather than a transparent model marketplace that helps you compare providers and route intelligently across many of them.

If your top priority is organization-wide governance, a gateway makes sense. If you want provider-agnostic access with pre-route transparency and automatic failover, look at an aggregator/marketplace such as ShareAI—or run the two side-by-side.

Aggregators vs Gateways vs Agent platforms

- LLM Aggregators / Marketplaces. One API over many models/providers with pre-route transparency (price, latency, uptime, availability, provider type) and smart routing/failover. Example: ShareAI.

- AI Gateways. Governance at the edge (keys, rate limits, guardrails), plus observability; you supply the providers. Examples: WSO2, Kong, Portkey.

- Agent/Chatbot platforms. Packaged UX (chat, tools, memory, channels) aimed at end-user assistants, not provider-agnostic aggregation. Example: Orq (orchestration-first).

How we evaluated the best WSO2 alternatives

- Model breadth & neutrality. Proprietary and open models; easy switching without rewrites.

- Latency & resilience. Routing policies, timeouts, retries, instant failover.

- Governance & security. Key handling, scopes, regional routing, guardrails.

- Observability. Logs/traces and cost/latency dashboards.

- Pricing transparency & TCO. Compare real costs before you route.

- Developer experience. Clear docs, SDKs, quickstarts; time-to-first-token.

- Community & economics. Does your spend grow supply (incentives for GPU owners/providers)?

Top 10 WSO2 Alternatives

#1 — ShareAI (People-Powered AI API)

What it is. A multi-provider API with a transparent marketplace and smart routing. With one integration, browse a large catalog of models and providers, compare price, latency, uptime, availability, and provider type, and route with instant failover. Economics are people-powered: 70% of every dollar flows to providers (community or company) who keep models online.

Why it’s #1 here. If you want provider-agnostic aggregation with pre-route transparency and resilience by default, ShareAI is the most direct fit. Keep any gateway you already use for org-wide policies; add ShareAI for marketplace-guided routing.

- One API → 150+ models across many providers; no rewrites, no lock-in. → Browse Models

- Transparent marketplace: choose by price, latency, uptime, availability, provider type.

- Resilience by default: routing policies + instant failover.

- Fair economics: 70% of spend goes to providers (community or company).

- Builder-friendly: Open Playground · API Reference · Create API Key
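The marketplace-plus-failover idea above can be sketched in a few steps:

1. Filter providers by availability, a price ceiling, and an uptime floor.
2. Rank the survivors by latency.
3. Try them in order, failing over on errors.

The `ProviderStats` fields, thresholds, and provider names are hypothetical placeholders. This is not ShareAI's API or its routing algorithm, and ShareAI's own routing policies may work differently.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class ProviderStats:
    """Hypothetical snapshot of one provider's marketplace stats."""
    name: str
    price_per_1k: float    # USD per 1K tokens
    p50_latency_ms: float  # median latency
    uptime: float          # fraction, 0.0 to 1.0
    available: bool        # online right now?


def rank_providers(stats: List[ProviderStats], max_price_per_1k: float,
                   min_uptime: float) -> List[ProviderStats]:
    """Drop unavailable or out-of-policy providers, then sort by latency (price breaks ties)."""
    eligible = [
        s for s in stats
        if s.available and s.price_per_1k <= max_price_per_1k and s.uptime >= min_uptime
    ]
    return sorted(eligible, key=lambda s: (s.p50_latency_ms, s.price_per_1k))


def call_with_failover(ranked: List[ProviderStats],
                       send: Callable[[str], str]) -> Tuple[str, str]:
    """Try providers in ranked order and fail over to the next one on any exception."""
    errors = []
    for provider in ranked:
        try:
            return provider.name, send(provider.name)
        except Exception as exc:  # a real client would catch only timeout/5xx-style errors
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Example with made-up numbers.
snapshot = [
    ProviderStats("provider-a", 0.60, 420, 0.999, True),
    ProviderStats("provider-b", 0.45, 380, 0.995, True),
    ProviderStats("provider-c", 0.30, 300, 0.970, True),   # below the uptime floor
    ProviderStats("provider-d", 0.40, 250, 0.999, False),  # currently unavailable
]
ranked = rank_providers(snapshot, max_price_per_1k=0.70, min_uptime=0.99)
print([p.name for p in ranked])  # ['provider-b', 'provider-a']


def flaky_send(name: str) -> str:
    """Stand-in for a real API call: the fastest provider times out."""
    if name == "provider-b":
        raise TimeoutError("timed out")
    return f"ok from {name}"


print(call_with_failover(ranked, flaky_send))  # ('provider-a', 'ok from provider-a')
```

Sorting on a (latency, price) tuple is only one possible policy. A cost-first team might flip the key order and still keep the same failover loop.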

For providers: earn by keeping models online

Anyone can become a ShareAI provider—Community or Company—and onboard via Windows, Ubuntu, macOS, or Docker. Contribute idle-time bursts or run always-on. Choose your incentive: Rewards (money), Exchange (tokens / AI Prosumer), or Mission (donate a % to NGOs). As you scale, you can set your own inference prices and gain preferential exposure. → Provider Guide

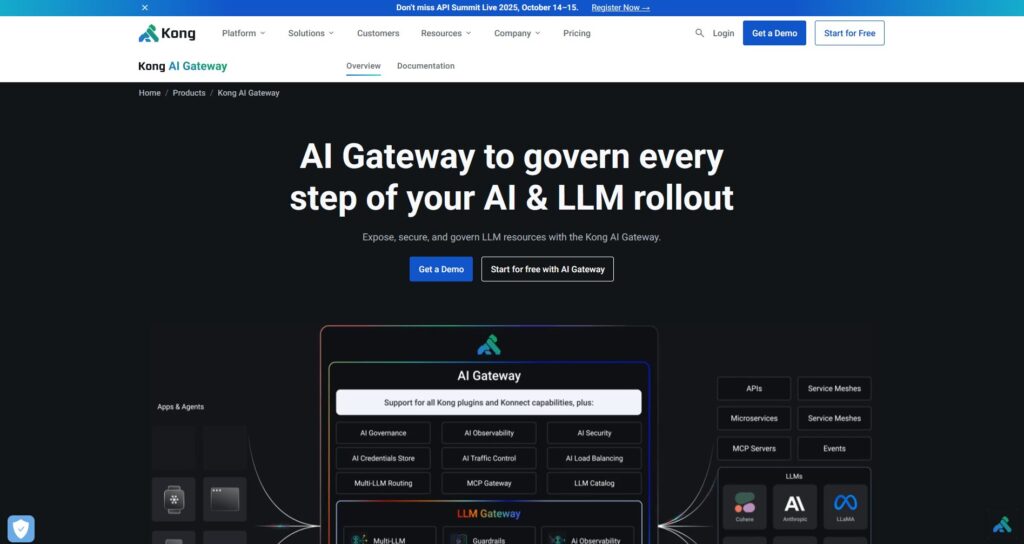

#2 — Kong AI Gateway

What it is. Enterprise AI/LLM gateway—governance, policies/plugins, analytics, and observability at the edge. A control plane rather than a marketplace.

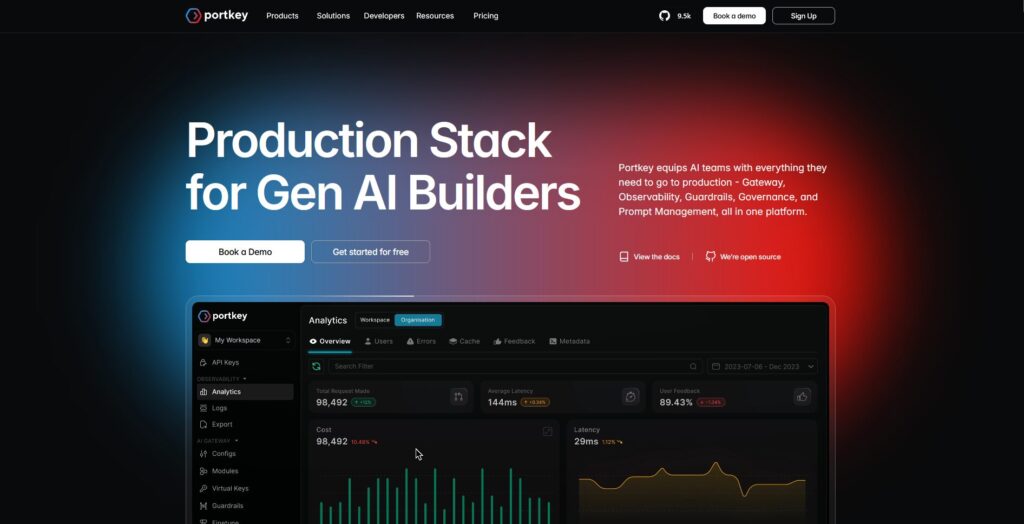

#3 — Portkey

What it is. AI gateway emphasizing guardrails and deep observability, common in regulated industries.

#4 — OpenRouter

What it is. Unified API over many models; great for fast experimentation across a wide catalog.

#5 — Eden AI

What it is. Aggregates LLMs and broader AI (vision, translation, TTS); offers fallbacks/caching and batching.

#6 — LiteLLM

What it is. A lightweight Python SDK + self-hostable proxy that speaks an OpenAI-compatible interface to many providers.

#7 — Unify

What it is. Quality-oriented routing and evaluation to pick better models per prompt.

#8 — Orq AI

What it is. Orchestration/collaboration platform to move from experiments to production with low-code flows.

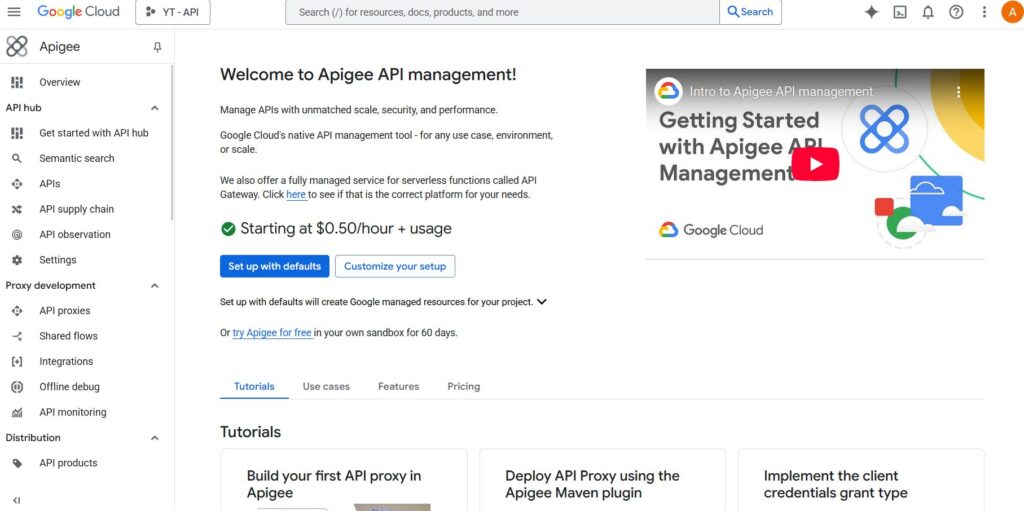

#9 — Apigee (with LLMs behind it)

What it is. Mature API management/gateway you can place in front of LLM providers to apply policies, keys, and quotas.

#10 — NGINX

What it is. DIY control: build custom routing, token enforcement, and caching for LLM backends if you prefer hand-rolled policies.
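For the DIY route, "token enforcement" typically means capping how many LLM tokens each API key can spend per unit of time. The sketch below shows that bookkeeping as a per-key token bucket in Python. Wiring it into NGINX itself would usually go through a scripting layer such as njs or OpenResty/Lua. The capacity, the refill rate, and the `KeyLimiter` helper are hypothetical.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Budget of LLM tokens that refills continuously at `refill_per_sec`, capped at `capacity`."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def try_consume(self, amount: float) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last check, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if amount <= self.tokens:
            self.tokens -= amount
            return True
        return False


class KeyLimiter:
    """One independent bucket per API key (hypothetical helper)."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, api_key: str, estimated_tokens: float) -> bool:
        bucket = self._buckets.get(api_key)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.refill_per_sec, self.clock)
            self._buckets[api_key] = bucket
        return bucket.try_consume(estimated_tokens)


# Example with a fake clock so the behaviour is deterministic.
fake_now = [0.0]
limiter = KeyLimiter(capacity=1000, refill_per_sec=100, clock=lambda: fake_now[0])
print(limiter.allow("key-a", 800))  # True  (200 tokens left)
print(limiter.allow("key-a", 300))  # False (only 200 left)
fake_now[0] = 2.0                   # two seconds later: +200 tokens
print(limiter.allow("key-a", 300))  # True  (100 left)
print(limiter.allow("key-b", 900))  # True  (each key has its own budget)
```

Here `estimated_tokens` is a pre-request estimate. A fuller version would reconcile it against the token usage reported after the response comes back.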

WSO2 vs ShareAI (at a glance)

- If you need one API across many providers with transparent pricing/latency/uptime and instant failover, choose ShareAI.

- If your top requirement is egress governance—centralized credentials, policy enforcement, and observability—WSO2 fits that lane.

- Many teams pair them: gateway for org policy + ShareAI for marketplace routing.

Quick comparison

| Platform | Who it serves | Model breadth | Governance & security | Observability | Routing / failover | Marketplace transparency | Provider program |
|---|---|---|---|---|---|---|---|
| ShareAI | Product/platform teams needing one API + fair economics | 150+ models, many providers | API keys & per-route controls | Console usage + marketplace stats | Smart routing + instant failover | Yes (price, latency, uptime, availability, provider type) | Yes — open supply; 70% to providers |
| WSO2 | Teams wanting egress governance | BYO providers | Centralized credentials/policies | Metrics/tracing (gateway-first) | Conditional routing via policies | No (infra tool, not a marketplace) | n/a |
| Kong AI Gateway | Enterprises needing gateway-level policy | BYO | Strong edge policies/plugins | Analytics | Proxy/plugins, retries | No (infra) | n/a |
| Portkey | Regulated/enterprise teams | Broad | Guardrails & governance | Deep traces | Conditional routing | Partial | n/a |
| OpenRouter | Devs wanting one key across many models | Wide catalog | Basic API controls | App-side | Fallbacks | Partial | n/a |
| Eden AI | Teams needing LLM + other AI services | Broad | Standard controls | Varies | Fallbacks/caching | Partial | n/a |
| LiteLLM | DIY/self-host proxy | Many providers | Config/key limits | Your infra | Retries/fallback | n/a | n/a |
| Unify | Quality-driven teams | Multi-model | Standard API security | Platform analytics | Best-model selection | n/a | n/a |
| Orq | Orchestration-first teams | Wide support | Platform controls | Platform analytics | Orchestration flows | n/a | n/a |
| Apigee / NGINX | Enterprises / DIY | BYO | Policies | Add-ons/custom | Custom | n/a | n/a |

Pricing & TCO: compare real costs (not just unit prices)

Raw $/1K tokens can hide the real picture. Your TCO shifts with retries/fallbacks, latency (which affects usage), provider variance, observability storage, and evaluation runs. A transparent marketplace helps you choose routes that balance cost and UX.

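$$
\text{TCO} \approx \sum_{r} \Big( \text{Base\_tokens}_r \times \text{Unit\_price}_r \times (1 + \text{Retry\_rate}_r) \Big) + \text{Observability\_storage} + \text{Evaluation\_tokens} \times \text{Unit\_price}_{\text{eval}} + \text{Egress}
$$

Here r ranges over the routes (model/provider pairs) you send traffic to. Evaluation tokens are multiplied by a unit price so that every term is in dollars.

- Prototype (~10k tokens/day): Optimize for time-to-first-token. Use the Open Playground and quickstarts.

- Mid-scale (~2M tokens/day): Marketplace-guided routing/failover may trim roughly 10–20% while improving UX, depending on how much cheaper equivalent routes are available.

- Spiky workloads: Expect higher effective token costs from retries during failover (a higher Retry_rate in the formula); budget for it.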
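To make the TCO formula concrete, here is a small calculator that mirrors its terms. It prices everything per 1K tokens and converts evaluation tokens to dollars so that every term shares a unit. The route mix, prices, retry rates, and overhead figures are made-up illustration values, not marketplace quotes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Route:
    """One model/provider route (illustrative fields)."""
    base_tokens: float        # tokens sent on this route per period
    unit_price_per_1k: float  # USD per 1K tokens
    retry_rate: float         # extra fraction of tokens re-sent by retries/fallbacks


def estimate_tco(routes: List[Route], observability_storage_usd: float,
                 evaluation_tokens: float, evaluation_price_per_1k: float,
                 egress_usd: float) -> float:
    """Sum of per-route token spend (inflated by retries) plus the other cost terms."""
    token_spend = sum(
        r.base_tokens / 1000 * r.unit_price_per_1k * (1 + r.retry_rate) for r in routes
    )
    evaluation_spend = evaluation_tokens / 1000 * evaluation_price_per_1k
    return token_spend + observability_storage_usd + evaluation_spend + egress_usd


# Mid-scale example: ~2M tokens/day for 30 days = 60M tokens, split across two routes.
routes = [
    Route(base_tokens=45_000_000, unit_price_per_1k=0.002, retry_rate=0.02),
    Route(base_tokens=15_000_000, unit_price_per_1k=0.010, retry_rate=0.05),
]
monthly = estimate_tco(routes, observability_storage_usd=300,
                       evaluation_tokens=3_000_000, evaluation_price_per_1k=0.002,
                       egress_usd=50)
print(f"${monthly:,.2f}")  # $605.30
```

In this made-up mix, observability storage and egress ($350) outweigh the retry-adjusted token spend ($249.30). A unit-price comparison alone would hide that.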

Migration guide: moving to ShareAI

From WSO2

Keep gateway-level policies where they shine; add ShareAI for marketplace routing + instant failover. Pattern: gateway auth/policy → ShareAI route per model → measure marketplace stats → tighten policies.

From OpenRouter

Map model names, verify prompt parity, then shadow 10% of traffic and ramp 25% → 50% → 100% as latency/error budgets hold. Marketplace data makes provider swaps straightforward.
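The ramp can be sketched as a deterministic percentage split plus a gate that only advances when latency and error budgets hold. This version treats the 10% stage as a live split. A true shadow would also send those requests to the old route and discard the new route's answers. The budget values and function names are assumptions for illustration.

```python
import hashlib

RAMP_STAGES = [10, 25, 50, 100]  # percent of traffic on the new route at each stage


def bucket(request_key: str) -> int:
    """Map a stable key (e.g. user or session ID) to 0-99 so a caller always lands on the same side."""
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def use_new_route(request_key: str, percent: int) -> bool:
    """True if this key falls inside the current rollout percentage."""
    return bucket(request_key) < percent


def next_stage(current: int, p95_latency_ms: float, error_rate: float,
               latency_budget_ms: float = 1500.0, error_budget: float = 0.01) -> int:
    """Advance one stage only while both budgets hold; otherwise stay at the current stage."""
    if p95_latency_ms > latency_budget_ms or error_rate > error_budget:
        return current
    i = RAMP_STAGES.index(current)
    return RAMP_STAGES[min(i + 1, len(RAMP_STAGES) - 1)]


stage = RAMP_STAGES[0]
stage = next_stage(stage, p95_latency_ms=900, error_rate=0.002)   # advances to 25
stage = next_stage(stage, p95_latency_ms=2100, error_rate=0.002)  # latency over budget: stays at 25
stage = next_stage(stage, p95_latency_ms=950, error_rate=0.004)   # advances to 50

keys = [f"user-{i}" for i in range(10_000)]
share = sum(use_new_route(k, stage) for k in keys) / len(keys)
print(stage, round(share, 2))  # stage is 50; share lands close to 0.5
```

A key's bucket never changes, so every caller routed at 25% is still routed at 50%. This keeps each user's experience stable as the ramp proceeds.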

From LiteLLM

Replace the self-hosted proxy on production routes you don’t want to operate; keep LiteLLM for dev if desired. Compare ops overhead vs managed routing benefits.

From Unify / Portkey / Orq / Kong

Define feature-parity expectations (analytics, guardrails, orchestration, plugins). Many teams run hybrid: keep specialized features where they’re strongest; use ShareAI for transparent provider choice and failover.

Developer quickstart (copy-paste)

Use the OpenAI-compatible Chat Completions endpoint. Replace YOUR_KEY with your ShareAI key (get one at Create API Key), and see the API Reference for details.

```bash
#!/usr/bin/env bash
# cURL: Chat Completions
# Prereqs:
#   export SHAREAI_API_KEY="YOUR_KEY"

curl -X POST "https://api.shareai.now/v1/chat/completions" \
  -H "Authorization: Bearer $SHAREAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "llama-3.1-70b",
    "messages": [
      { "role": "user", "content": "Give me a short haiku about reliable routing." }
    ],
    "temperature": 0.4,
    "max_tokens": 128
  }'
```

```javascript
// JavaScript (fetch): Node 18+/Edge
// Prereqs:
//   export SHAREAI_API_KEY="YOUR_KEY"  (read below via process.env)

async function main() {
  const res = await fetch("https://api.shareai.now/v1/chat/completions", {
    method: "POST",
    headers: {
      "Authorization": `Bearer ${process.env.SHAREAI_API_KEY}`,
      "Content-Type": "application/json"
    },
    body: JSON.stringify({
      model: "llama-3.1-70b",
      messages: [
        { role: "user", content: "Give me a short haiku about reliable routing." }
      ],
      temperature: 0.4,
      max_tokens: 128
    })
  });

  if (!res.ok) {
    console.error("Request failed:", res.status, await res.text());
    return;
  }

  const data = await res.json();
  console.log(JSON.stringify(data, null, 2));
}

main().catch(console.error);
```

```python
# Python: requests
# Prereqs:
#   pip install requests
#   export SHAREAI_API_KEY="YOUR_KEY"
import json
import os

import requests

API_KEY = os.environ.get("SHAREAI_API_KEY", "YOUR_KEY")
url = "https://api.shareai.now/v1/chat/completions"

payload = {
    "model": "llama-3.1-70b",
    "messages": [
        {"role": "user", "content": "Give me a short haiku about reliable routing."}
    ],
    "temperature": 0.4,
    "max_tokens": 128,
}

headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json",
}

# A timeout keeps a stalled connection from hanging the script indefinitely.
resp = requests.post(url, headers=headers, json=payload, timeout=30)
print(resp.status_code)

# Error bodies may not be JSON, so only parse on success.
if resp.ok:
    print(json.dumps(resp.json(), indent=2))
else:
    print("Request failed:", resp.text)
```

Security, privacy & compliance checklist (vendor-agnostic)

- Key handling: rotation cadence; minimal scopes; environment separation.

- Data retention: where prompts/responses are stored; duration; redaction defaults.

- PII & sensitive content: masking; access controls; regional routing for data locality.

- Observability: prompt/response logging; ability to filter/pseudonymize; propagate trace IDs consistently.

- Incident response: escalation paths and provider SLAs.
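Two items on this checklist can be sketched with the Python standard library: redacting PII before logging, and pseudonymizing user identifiers while keeping a trace ID. The regexes are deliberately simple. They will miss many formats and may over-match long numeric IDs. The field names and secret handling are hypothetical; a production setup would use a dedicated PII detector and a managed secret.

```python
import hashlib
import hmac
import re
import uuid
from typing import Dict, Optional

# Deliberately simple patterns; real PII detection needs much more than this.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Mask email addresses first, then phone-like digit runs."""
    return PHONE_RE.sub("[PHONE]", EMAIL_RE.sub("[EMAIL]", text))


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Keyed hash: stable per user so logs can still be joined, but the raw ID is never stored."""
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def log_record(user_id: str, prompt: str, secret: bytes,
               trace_id: Optional[str] = None) -> Dict[str, str]:
    """Build a log entry that carries a trace ID but no raw PII (hypothetical field names)."""
    return {
        "trace_id": trace_id or uuid.uuid4().hex,
        "user": pseudonymize(user_id, secret),
        "prompt": redact(prompt),
    }


record = log_record(
    "alice@example.com",
    "Email me at alice@example.com or call +1 415 555 0100.",
    secret=b"load-me-from-a-secret-store",
    trace_id="req-7f3a",
)
print(record["prompt"])  # Email me at [EMAIL] or call [PHONE].
```

Passing an incoming trace ID through, instead of always generating a new one, is what lets you follow one request across gateway, router, and provider logs.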

FAQ — WSO2 alternatives & comparison matchups

WSO2 vs ShareAI — which for multi-provider routing?

ShareAI. It’s built for marketplace transparency (price, latency, uptime, availability, provider type) and smart routing/failover across many providers. WSO2 is a governance tool (centralized credentials/policy; gateway-first observability). Many teams use both.

WSO2 vs Kong AI Gateway — who’s stronger on edge policy?

Both are gateways; Kong is known for a deep plugin ecosystem and edge policies, while WSO2 aligns closely with API-management workflows. If you also want pre-route transparency and instant failover, layer in ShareAI.

WSO2 vs Portkey — governance and guardrails?

Portkey emphasizes guardrails and tracing depth; WSO2 offers policy-driven governance. For provider-agnostic choice with marketplace stats and automatic failover, add ShareAI.

WSO2 vs OpenRouter — marketplace breadth or gateway control?

OpenRouter offers a broad model catalog; WSO2 centralizes policy. If you want breadth + resilience with live marketplace metrics, ShareAI combines multi-provider routing with transparent pre-route data.

WSO2 vs Orq — orchestration vs egress?

Orq helps orchestrate workflows; WSO2 governs egress. Keep your orchestration where it shines and use ShareAI for provider-agnostic routing with a market view.

Try ShareAI next

- Open Playground — test models live.

- Create your API key — start integrating in minutes.

- Browse Models — compare price, latency, uptime, availability, provider type.

- Docs Home · User Guide · Releases
